Task-driven Webpage Saliency

City University of Hong Kong

Abstract

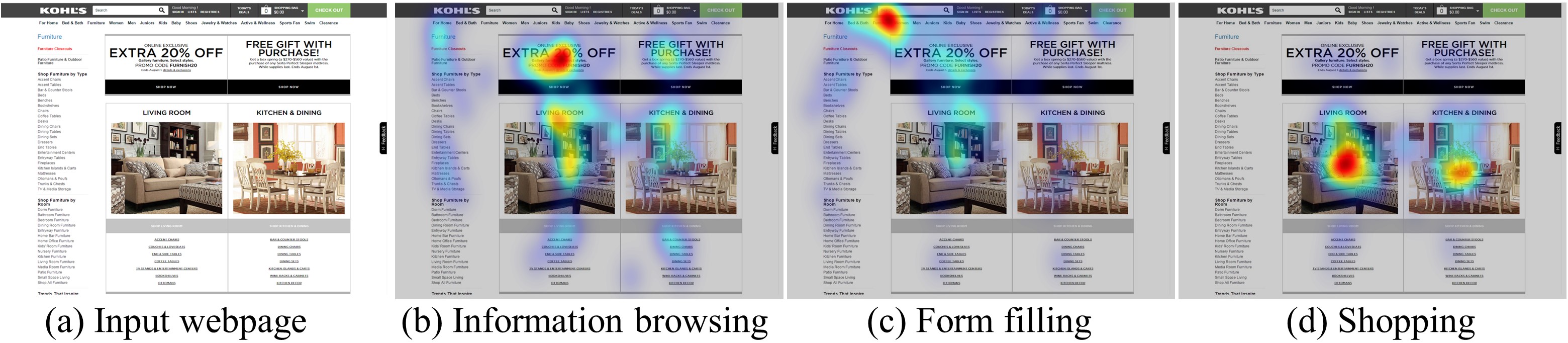

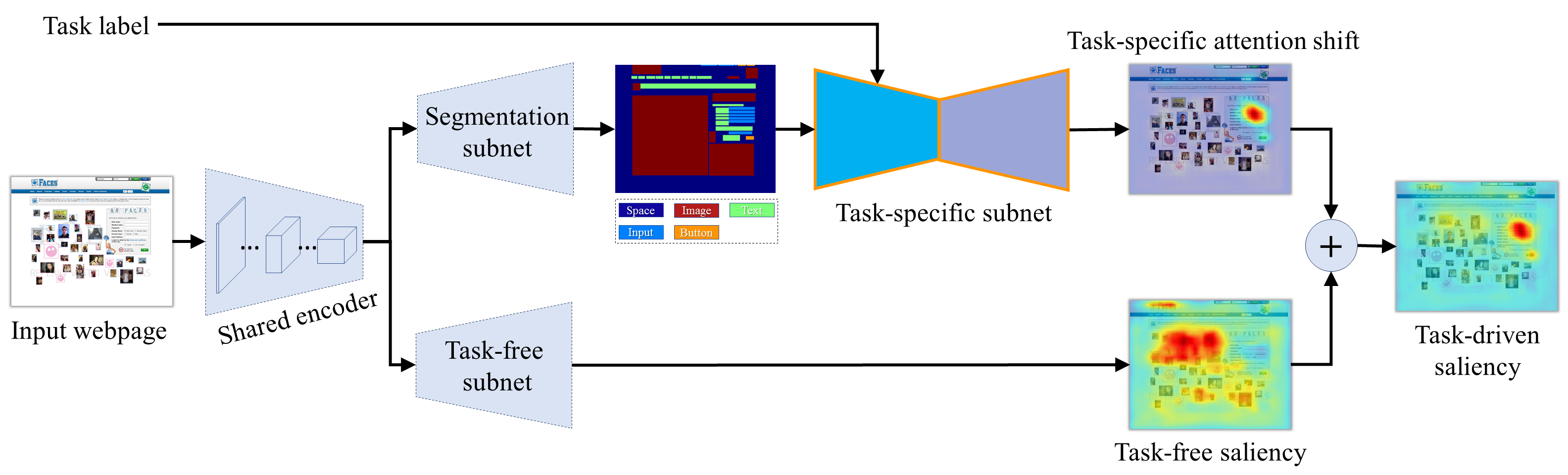

In this paper, we present an end-to-end learning framework for predicting task-driven visual saliency on webpages. Given a webpage, we propose a convolutional neural network to predict where people look on it under different task conditions. Inspired by the observation that, given a specific task, human attention is strongly correlated with certain semantic components on a webpage (e.g., images, buttons and input boxes), our network explicitly disentangles saliency prediction into two independent sub-tasks: task-specific attention shift prediction and task-free saliency prediction. The task-specific branch estimates the task-driven attention shift over a webpage from its semantic components, while the task-free branch infers the visual saliency induced by the visual features of the webpage. The outputs of the two branches are combined to produce the final prediction. This task decomposition allows us to learn our model efficiently from a small-scale task-driven saliency dataset with sparse labels (captured under a single task condition). Experimental results show that our method outperforms the baselines and prior works, achieving state-of-the-art performance on a newly collected benchmark dataset for task-driven webpage saliency detection.
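The combination of the two branch outputs can be illustrated with a minimal sketch. The abstract does not specify the combination operator, so the element-wise sum, the clipping at zero, and the normalization used here are assumptions for illustration only, and the function name `combine_branches` and the toy 2x2 maps are hypothetical. Plain nested lists stand in for the saliency maps that a real network would produce as tensors.

```python
def combine_branches(task_free, task_shift):
    """Combine a task-free saliency map with a task-specific attention shift.

    Assumption (not stated in the abstract): the maps are summed element-wise,
    negative values are clipped to zero, and the result is normalized to sum to 1.
    """
    same_shape = len(task_free) == len(task_shift) and all(
        len(a) == len(b) for a, b in zip(task_free, task_shift)
    )
    if not same_shape:
        raise ValueError("both maps must have the same shape")

    # Element-wise sum, clipped so that saliency never becomes negative.
    combined = [
        [max(f + s, 0.0) for f, s in zip(free_row, shift_row)]
        for free_row, shift_row in zip(task_free, task_shift)
    ]

    total = sum(sum(row) for row in combined)
    if total == 0:
        # Degenerate case: fall back to a uniform map.
        n = sum(len(row) for row in combined)
        return [[1.0 / n for _ in row] for row in combined]
    return [[v / total for v in row] for row in combined]


# Hypothetical toy maps: the task shifts attention toward the top-left cell.
task_free = [[0.1, 0.4], [0.3, 0.2]]
task_shift = [[0.3, -0.2], [0.0, -0.1]]
final_map = combine_branches(task_free, task_shift)
```

In this toy example the task-free map peaks at the top-right cell, while the combined map peaks at the top-left cell, which mirrors the idea that a task moves attention toward particular semantic components.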

Architecture

Citation

@inproceedings{zheng2018taskWebSaliency,
  author = {Quanlong Zheng and Jianbo Jiao and Ying Cao and Rynson Lau},
  title = {Task-driven Webpage Saliency},
  booktitle = {Proceedings of the European Conference on Computer Vision (ECCV)},
  month = {September},
  year = {2018}
}